One 🤖 is good, but 🤖🤖 from different vendors is better. How to make Claude and Codex argue with each other.

Recently OpenAI released an open-source plugin that gives Claude Code a structured integration with Codex. Everything works right from VS Code via the Claude Code Extension. In my experience, even on tasks unrelated to code, two “AI heads” produce better results than one. A single AI has no incentive to challenge its own conclusions, and it is limited by its training conditions. But managing the interaction between two AIs used to be uncomfortable. The new plugin made it easier, and with additional skills for Claude Code - even more so. Below are the skills that turn AI advisors into structured opponents.

Before I figured out how to use the plugin effectively, I was getting conversations like this:

- Me: Feel free to call Codex for a second opinion if needed.

- Claude: I won't call Codex - I have enough material for a confident decision.Fair enough - the task wasn’t framed as a task. What I actually needed was to write interaction protocols. After experimenting, I arrived at three scenarios where debates produce a tangible result. Each became a separate skill for Claude Code. All three skills can save a visible debate transcript - arguments, critiques, outcomes, and conclusions - to a Markdown log file, so you can revisit the results at any time.

/options-challenge - when you need to choose between alternatives. You’re at a fork: which framework to pick, how to design an API, which strategy to choose. Claude formulates 2-4 options with arguments for each. Codex receives the same options and attacks each one: what’s the strength, what’s the main risk, what has to be true for the option to work. Claude incorporates the critique and ranks the options from strongest to weakest. Codex pressure-tests the top two - and you get a decision map with explicit tradeoffs.

Example: I needed to add links to LinkedIn, GitHub, and Telegram to my site without turning the top bar into an overloaded mix of navigation and external links. Claude proposed several options: keep social links in the top menu, move them to the footer, hide them in a dropdown, or place them on the About page and at the end of posts. Codex filtered out solutions that work poorly on mobile or add unnecessary complexity. The result was a clean menu, social links in the footer, and an additional contact block where the reader is actually ready to click.

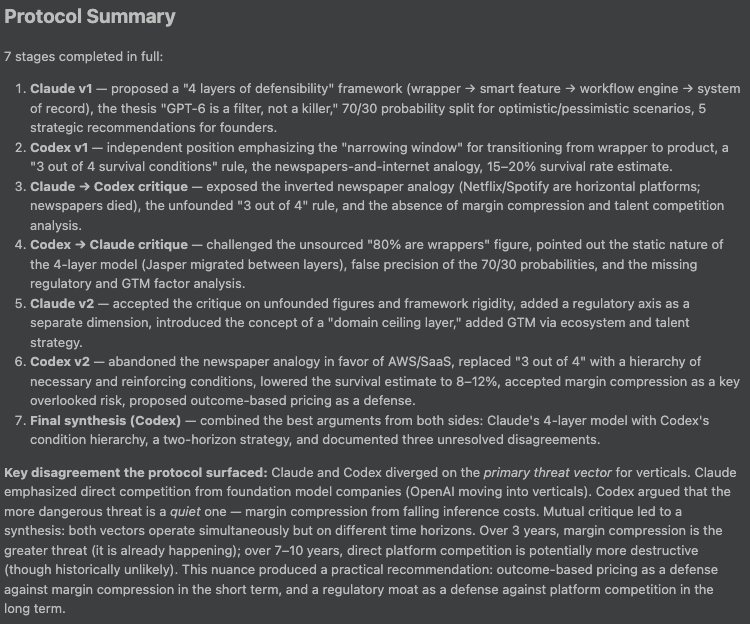

/strategy-debate - when you need deep analysis. Both models independently formulate a position on the question, then in rounds of cross-critique they attack each other’s weak points and revise their arguments. The format is tougher: each iteration requires specific counterarguments with reasoning, not vague phrases like “it’s also worth considering.” At the end, a finalizer of your choice (Codex or Claude) summarizes the outcome. Works for business strategies, architectural decisions, project planning.

Example: a LinkedIn post by an investor - “I passed on 473 AI startups. None had actual moats” - claims that most AI startups remain thin wrappers over APIs and lists four sources of defensibility. I took this thesis as a starting point: is this set of criteria enough to separate a temporary layer from a real product? Claude and Codex proposed their own analytical frameworks, and during the cross-critique phase they discovered that defensibility can’t be described on a single linear scale because the strength of each barrier depends on the industry. Ultimately the debate arrived at a practical framework: what exactly in a product will remain valuable after the next leap in base model quality.

/creator-critic - when you need to generate and filter for the best. One model (creator) generates 3-5 ideas or approaches, the other (critic) dissects each one: what’s the value, what’s the main flaw, what hidden assumption exists, how it could fail. Then the creator revises the list, drops weak options, and narrows down to the 1-3 strongest. The result is not a “first draft” but a filtered recommendation.

Example: suppose a team is choosing a name for a new internal tool that might later become an external product. Claude generated options, Codex rejected the generic, ambiguous, and poorly scalable ones. The result was 2-3 names worth carrying forward.

The key point here is cross-vendor. These aren’t two instances of the same model sharing the same blind spots. Claude from Anthropic and Codex from OpenAI are trained on different data, by different teams, with different priorities. Their blind spots are different - and a structured debate surfaces them.

This doesn’t mean that debate within a single provider is pointless. You can dynamically spin up multiple agents with different archetypes, and that approach wins on simplicity, launch speed, and not needing a second setup. But it optimizes role diversity within one model family. My approach optimizes something else: the collision of different model priors and different RLHF preferences. For some tasks the first mode is enough; for ambiguous decisions I deliberately chose the second.

Debates don’t make sense when:

- there’s only one correct answer.

- the answer is trivial. Debates burn quota on both AIs.

- you need a quick answer, since debates can take several minutes.

What you need to get started:

- Claude Code

- Codex CLI -

npm install -g @openai/codex, thencodex login - Codex plugin for Claude Code - installed from within Claude Code

- Skills from the repository - symlinked into

~/.claude/skills/

Repository: github.com/biyachuev/claude-debate-skills

If you try it - let me know how it goes. I’m especially interested in cases where the debate produced an unexpected result.